CYBERSECURITY

The Future of Cybersecurity is Punchbowl News’ latest editorial project. This four-part series dives into the issue of cybersecurity, including how it’s playing out in the government and private sector, the legislative prospects and key players to watch.

Welcome to the Future of Cybersecurity, Punchbowl News’ latest editorial product — just in time for Cybersecurity Awareness Month.

Cybersecurity is an inescapable part of our lives, from the mundane to the all-encompassing. That’s why we’re devoting the next four weeks to diving deep into the issue. We’ll lay out the cyber legislative landscape, highlight the key players to watch and more.

In many ways, Congress, and the world, has been playing catch-up on cybersecurity. The field started out as a way to protect information systems and personal data. Today the problem is much bigger, and the rise of artificial intelligence complicates it further.

Cybersecurity is a major concern for industry, individuals and government. It ranges from simple steps, like protecting private text messages on your cellphone or guarding your online accounts with two-factor authentication, to more sophisticated needs, like fortifying a company’s systems against ransomware attacks or hardening 5G networks against potential manipulation.

“I’ve been hacked” are words no one ever wants to utter.

There’s a sense on Capitol Hill that after several years of trying to find their footing on cyber, lawmakers are finally starting to make strides. Russia’s interference in the 2016 election was a crucial moment for Congress. It prompted investigations into Moscow’s complex effort, and lawmakers spearheaded the implementation of several safeguards throughout the federal government.

Congress even established a new agency in the aftermath of Russia’s attack — the Cybersecurity and Infrastructure Security Agency.

But the spread of AI has essentially reset the clock for lawmakers, who are now grappling with new cybersecurity concerns as well as significant changes in the workforce. A bipartisan forum on AI that Senate Majority Leader Chuck Schumer hosted in September with tech executives and stakeholders revealed broad concern about Congress’ ability to regulate AI before it’s too late.

We’re excited to jump right into the issue, starting with a dive into what’s happening on Capitol Hill.

The rise of artificial intelligence has added new fears — and some excitement — to the cybersecurity quagmire.

For congressional leaders, the robust debate over whether AI can be cybersecurity’s savior or its worst enemy makes action all the more urgent as the 2024 election nears.

The memory of Russia’s 2016 interference in U.S. politics is still fresh. And Senate Majority Leader Chuck Schumer says there’s an “immediate” need to pass legislation on AI well before the next presidential election.

Similarly, Senate Intelligence Committee Chair Mark Warner (D-Va.) told us he worries AI could make 2016 “look like child’s play” with its manipulation capabilities.

But it’s not all doom and gloom. Lawmakers from both parties say AI carries great benefits and has the potential to supercharge new workforce opportunities and revive certain industries.

All hands on deck: As AI takes center stage in cyber policy, any meaningful action would require a multi-pronged approach, from Capitol Hill to the White House to Silicon Valley.

Lawmakers in the meantime are convening tech executives and industry stakeholders for their input as they navigate an increasingly complex legislative roadmap. And the White House is working with tech companies to ensure they’re properly managing the myriad risks and opportunities associated with AI’s prevalence.

“These companies are in such a race that I feel like we may be repeating what we’ve done in the past where cyber becomes an after-thought that you have to build into after the tools are created — rather than something that ought to be built in on the front end,” Warner told us.

For so many years, Congress was playing catch-up on cybersecurity policy. There was a massive learning curve for lawmakers who came of age with rotary phones and no internet. Those lawmakers are now some of the leading voices shaping cyber policy in America.

“It seems that Congress has finally really discovered artificial intelligence,” Sen. Martin Heinrich (D-N.M.), who founded the Senate’s AI Caucus in 2019, told us recently.

Heinrich had just attended an AI forum he convened with Schumer and Sens. Mike Rounds (R-S.D.) and Todd Young (R-Ind.).

The goal of the forum, which featured high-profile names like Elon Musk and Bill Gates, was to “make sure that all of our colleagues have the fluency in this space that our constituents would want of us,” Heinrich said. Senators also view it as a way to maintain and advance American leadership and innovation among global competitors. Schumer is planning to host several more of these forums.

Clément Delangue, the CEO of Hugging Face, an AI development platform, also attended the forum and said he was encouraged by senators’ questions on how to stay ahead of the United States’ adversaries like China, Iran and Russia.

“It makes me pretty optimistic about the ability of the U.S. to draft regulation that is going to foster its leadership on technology,” Delangue told us. “Because this is not automatic.”

The Senate Intelligence Committee, one of the most bipartisan panels in Congress, would take the lead on legislation to combat foreign interference. The panel has gained a lot of expertise since the 2016 election, when the committee investigated Moscow’s sprawling interference campaign.

Warner worries about a repeat of 2016 – or worse. He said so-called “deep fakes” can make it seem like someone said something they didn’t or make it seem like something occurred that didn’t — and it could all spread on social media like wildfire. This could also have massive implications for financial markets.

The industry itself, Warner noted, has been noncommittal about what specific AI guardrails it would support. The Intelligence Committee experienced the same issue with social media companies like Facebook and Twitter after the 2016 election.

The TikTok twist: There’s another element to cybersecurity policy that captivated Congress earlier this year but seemed to fade shortly thereafter. It’s the rise of TikTok, the Chinese-owned social media platform, and what it means for Americans’ data privacy and the government’s ability to prevent it from being used by Beijing for cyber espionage.

We’ll focus more on legislation in our next edition, but lawmakers in both chambers have authored and debated several different ways to restrain TikTok. Some favor an outright ban, and others want to create a review process that could lead to a prohibition of the app.

Around 100 million Americans, including millions of kids, use TikTok, so this is a cyber debate that touches everyone in one way or another.

For Congress, crafting cybersecurity policy is no longer a task for the wonks and the industry denizens; it’s increasingly viewed as something that everyone must contribute to.

Of course, passing anything in a divided government is difficult. And this particular divided government is proving to be one of the most challenging in recent memory. Speaker Kevin McCarthy has spent more time dealing with a rebellious right flank than on bipartisan legislation, and there are doubts about next year’s prospects as well.

“To not act would be something we want to avoid,” Schumer said. “We know it’s going to be hard.”

— Andrew Desiderio

Cybersecurity is one of the few issues that consistently yields bipartisan legislation in Congress. That doesn’t necessarily mean it’s been easy to tackle – or that every, or any, proposal will become law.

The technology behind cybersecurity threats is evolving faster than Congress can legislate. Jurisdiction over the issue is also split among several committees on the Hill. And regulating such a complicated matter at the federal level poses its own legal challenges.

In this week’s edition, we take a look at the legislative landscape in Congress. Most congressional efforts are focused on three major areas — artificial intelligence, social media and government surveillance.

Artificial intelligence: The Senate is taking the lead on tackling AI. Senate Majority Leader Chuck Schumer wants to move legislation sometime next year, in part to protect against interference and misinformation in the 2024 election.

Schumer hosted a forum in September with tech CEOs and industry representatives.

The closed-door meeting was designed to inform lawmakers as they craft a framework that seeks to expand AI’s benefits and mitigate the many risks involved.

Despite Schumer’s lengthy timeline, there are already dozens of legislative proposals that give us a good sense of where Congress might be headed on AI.

A bipartisan framework from Sens. Richard Blumenthal (D-Conn.) and Josh Hawley (R-Mo.) would set up an independent oversight body to monitor and audit certain AI platforms. Blumenthal describes it as a way to address the “promise and peril AI portends.”

Lawmakers are particularly concerned about the possibility of hostile foreign governments using AI to sow chaos in elections and financial markets in the United States and other Western countries. The Senate Intelligence Committee is taking the lead on assessing this concern.

But lots of conservatives argue Blumenthal and Hawley’s approach could lead to overregulation that stifles innovation. There’s also worry that new regulatory bodies could be used to infringe on Americans’ First Amendment rights.

“Let’s be clear — AI is computer code developed by humans. It is not a murderous robot,” Sen. Ted Cruz (R-Texas) said. “Unfortunately, the Biden administration and some of my colleagues in Congress have embraced doomsday AI scenarios as justification for expanded federal regulation.”

Social media: After TikTok CEO Shou Zi Chew testified before a House panel in March, a bipartisan backlash against the popular Chinese-owned social media app quickly took shape. Lawmakers in both parties are concerned that the Chinese government could use the app to collect Americans’ data and conduct cyber espionage.

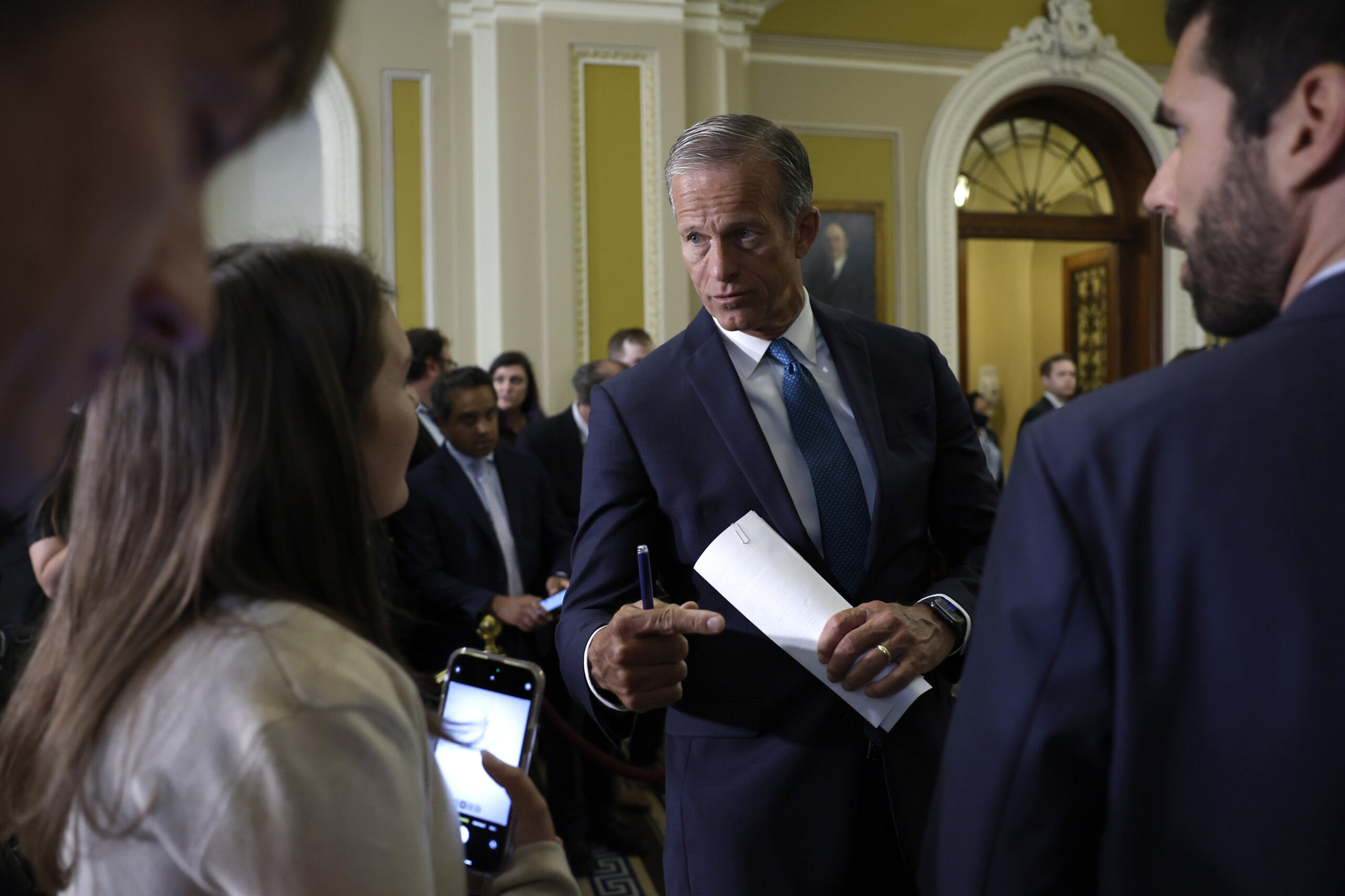

The hearing prompted several legislative proposals that include an outright prohibition of the app and a nuanced review process that could eventually lead to a ban of TikTok and other foreign tech platforms.

The latter — a bill introduced by Sens. Mark Warner (D-Va.) and John Thune (R-S.D.) — has the backing of the White House and a broad bipartisan coalition. The legislation is based on an executive order from former President Donald Trump that sought to ban foreign-owned tech but was partially struck down by a federal court.

“This represents something that both the Trump and Biden administrations agree was a good path forward,” Thune said of the bill.

The Senate Commerce Committee is planning to mark it up at some point. Despite the grave warnings about cybersecurity threats posed by TikTok — not to mention the overall sense of urgency — lawmakers on the panel seem to be taking their time.

Unsurprisingly, that hesitance is partially due to Congress’ extreme workload this year. It’s also because, at the leadership level, lawmakers are worried about the almost-certain backlash from the millions of TikTok users in the United States. Many of those TikTokers depend on the app for their businesses.

Government surveillance: Congress has until the end of the year to reauthorize a controversial foreign surveillance program. Commonly referred to as Section 702 of the Foreign Intelligence Surveillance Act, the program has served as a critical counterterrorism tool.

But, like most things on Capitol Hill, re-upping Section 702 won’t be easy. Battle lines are already drawn. On one side are civil liberties advocates who want to curtail the program. On the other are national security hawks who worry those changes could make it harder for the government to thwart foreign terror attacks.

Past reauthorization efforts have produced bipartisan odd couples on both sides of the issue. Some of the most liberal and most conservative lawmakers, such as Sens. Ron Wyden (D-Ore.) and Rand Paul (R-Ky.), have joined forces to try to limit the government’s ability to use the program to conduct warrantless searches of Americans. We expect that to be the case this time as well.

The Privacy and Civil Liberties Oversight Board, an independent government watchdog, released a highly anticipated report on the program last month.

The board recommended that Section 702 be reauthorized only with reforms to protect Americans’ privacy rights. But the two Republican members of the five-person group strongly objected to the report’s findings, underscoring the significant disagreements lawmakers will soon have to confront.

This debate gets at the biggest challenge of crafting cybersecurity policy — balancing the interests of national security with constitutional protections.

We’ll be keeping a close watch on how these legislative efforts progress.

It will ultimately come down to whether lawmakers can get everyone from the tech world, to government, to advocacy groups on the same page.

— Andrew Desiderio

Any substantial action on cybersecurity will require cooperation from a broad coalition. In this installment of The Future of Cybersecurity series, we take a look at the biggest cybersecurity players in Congress, the Biden administration and the industry.

On the Hill, the Senate is taking the lead role in crafting legislation to address the ever-evolving capabilities — both positive and negative — of artificial intelligence.

There’s also widespread evidence that U.S. adversaries like China are using AI to advance their military and economic capabilities in ways that could ultimately prove harmful to U.S. interests. That’s a cause for concern among lawmakers.

If and when the legislative efforts shift into high gear over in the House, we’ll be keeping an eye on folks like Reps. Michael McCaul (R-Texas), Anna Eshoo (D-Calif.), Ted Lieu (D-Calif.) and Frank Lucas (R-Okla.), who chairs the House Science Committee.

And the House has a resident AI expert in Rep. Jay Obernolte (R-Calif.), whom former Speaker Kevin McCarthy tapped to lead a working group on the issue. Obernolte, who has a master’s degree in AI, is also a vice chair of the Congressional Artificial Intelligence Caucus.

Let’s dive in.

Senate Majority Leader Chuck Schumer

As we’ve chronicled in our previous editions, cybersecurity policy efforts on the Hill are increasingly focused on AI, thanks in large part to Schumer and the bipartisan coalition he put together.

Schumer’s message on AI has been consistent: Congress must step up with comprehensive legislation that addresses AI’s great benefits as well as the existential threats it could pose to American society, including financial markets and elections.

Schumer has tasked committee leaders from both parties with finding common ground on legislation that establishes guardrails for AI, especially on ways to mitigate the threats it could pose to American elections. Schumer has cited foreign election interference as a chief reason for Congress to act on AI before the 2024 election.

Sen. Mike Rounds (R-S.D.)

Rounds is Schumer’s main GOP counterpart on the broader AI effort. The pair co-moderated the first AI insight forum in September.

Rounds has a personal connection here that’s also worth spotlighting. His wife died from cancer two years ago, and he has sought to maximize the potential for AI to help accelerate a cure for cancer and other long-term illnesses.

The technology behind AI can “give a doctor more tools” to find and ultimately test different kinds of cures, Rounds told us.

Sen. Marsha Blackburn (R-Tenn.)

Blackburn’s involvement could be crucial in cobbling together the diverse coalition of lawmakers necessary to get anything meaningful done on AI.

Blackburn is one of the most conservative members of the Senate. And she’s urging Congress to catch up to other countries on what she calls “the world’s hottest policy debate.”

Her focus thus far has been on countering China’s ability to use AI to advance its geopolitical interests around the world, from cyber espionage to its surveillance state and expanded military capabilities.

Sen. Maria Cantwell (D-Wash.)

Cantwell chairs the Senate Commerce Committee, which would play a major role in any AI legislation.

She has emphasized two critical aspects of AI: how it will alter the workforce and how it can be used to deceive Americans online.

Cantwell has compared the need to pass AI legislation to the effort to enact the landmark GI Bill, which was enacted near the end of World War II to prepare Americans for the future of the economy. In today’s context, Cantwell argues, that should entail workforce training programs and education about AI’s capabilities.

She has also talked about the need to curb the spread of so-called “deep fakes” on the internet.

Arati Prabhakar, director of the White House Office of Science and Technology Policy

As the president’s top science and tech adviser, Prabhakar effectively leads the Biden administration’s efforts on AI, including the White House’s proposed “AI Bill of Rights” framework.

In recent congressional testimony, Prabhakar indicated that President Joe Biden will soon roll out an executive order centered on AI, but didn’t elaborate.

Jen Easterly, director of the Cybersecurity and Infrastructure Security Agency

Easterly’s job makes her the top cyber official in the federal government.

One of CISA’s chief missions is to safeguard American elections from cyberattacks and efforts by foreign adversaries to use AI to sow chaos and discontent among the American electorate.

Easterly has said AI’s ability to spread misinformation and disinformation could be one of the most detrimental cyber threats ahead of the 2024 elections.

Kemba Walden, acting director, White House Office of the National Cyber Director

Walden helped build the White House’s Office of the National Cyber Director, where she first served as deputy director.

Walden has had a long career in cybersecurity, including as assistant general counsel in Microsoft’s Digital Crimes Unit, at the Department of Homeland Security and as a cybersecurity attorney for CISA.

Walden was instrumental in drafting the Biden administration’s national cybersecurity strategy and developing its National Cyber Workforce and Education Strategy.

Sam Altman

Altman, the CEO of OpenAI, was one of the headliners at the Schumer-Rounds AI forum in September.

OpenAI owns ChatGPT, a language-based AI platform that’s less than a year old but has already been used by hundreds of millions of people.

Importantly, ChatGPT’s source code is not public. Its owners have suggested that making it open-source could pave the way for an AI “arms race.” This is a significant debate in the AI world right now.

Elon Musk, Mark Zuckerberg, Sundar Pichai — “The CEOs”

Google CEO Sundar Pichai, Meta CEO Mark Zuckerberg and Tesla/X CEO Elon Musk were the big-name headliners at the Senate’s AI forum.

Meta’s language-based AI platform, known as Llama 2, differs from ChatGPT in that it is open-source. Zuckerberg has vigorously defended the open-source model. He says it encourages innovation and “improves safety and security because when software is open, more people can scrutinize it to identify and fix potential issues.”

Pichai has said AI is his top priority because it will represent the most important technological shift in any of our lifetimes. Both Pichai and Musk have raised significant concerns about the “arms race” theory. They argue it could create the wrong incentives for companies and ultimately do more harm than good.

— Andrew Desiderio

As concerns about cybersecurity grow, so do the efforts to sway potential policy outcomes.

There’s an intense lobbying campaign on K Street, with tech giants and outside advocacy groups pouring millions of dollars into the issue.

Some of these moves are playing out in prominently featured television and online ads that pop up across the web and social media.

In this edition of The Future of Cybersecurity series, we take a look at the money and influence campaigns that could shape cybersecurity policy, impact the way social media companies operate and affect how they handle user data.

Lobbying

The number of organizations lobbying on AI issues ballooned from just a handful in 2013 to more than 120 in the first quarter of 2023 alone, a recent OpenSecrets analysis found.

This is a reflection of the rapid pace of the technological advancements behind AI. It’s also a recognition by big tech companies that they need to try to get out in front of Congress and state-level regulators if they’re to have any say in how AI policy is crafted.

For example, Meta spent nearly $10 million on lobbying related to AI issues in the first two quarters of 2023, according to the Senate lobbying disclosure database. The company also lobbies the federal government on general cybersecurity and election security issues, especially since Russia tried to use Facebook to influence the outcome of the 2016 election.

Other tech giants have poured millions of dollars into the issue as well. Amazon spent more than $9 million in the first two quarters this year on lobbying related to AI and cybersecurity issues.

Oracle, the computer software company, spent more than $5 million lobbying on AI, privacy and issues “related to government certification and cybersecurity standards for Cloud Service Providers” in the first half of this year.

And Google Client Services doled out more than $6 million for these types of lobbying efforts during the same time period. These figures reflect lobbying on a range of issues, including privacy, cybersecurity, artificial intelligence and more.

Lobbying groups like NetChoice have expanded their AI efforts in recent years to keep up with the pace of state-level action on the issue. A trade association, NetChoice represents the interests of several major tech companies in their bids to limit the regulation of their platforms. NetChoice does not publicly disclose how much it gets from the companies it represents.

These efforts have extended to other major cybersecurity issues as well, such as the regulation of children’s use of social media.

NetChoice and others have argued that legislation aimed at protecting kids from the harmful effects of social media could have the unintended consequence of undermining privacy rights. NetChoice’s funding, of course, comes largely from the big tech companies, which have a financial stake in this outcome.

The states

States are also moving ahead on AI regulations while the timeline for federal action remains unclear. That has prompted lobbyists to jump into the conversation in state capitals across the country, pushing on everything from AI to election security.

State governments run elections separately from the federal government, so ensuring that voting systems are secure is a big priority at the local level. That often involves allocating millions of dollars to local jurisdictions to enhance their election infrastructure.

It should come as no surprise that many of these state-level lobbying efforts are originating in California, home to Silicon Valley and the myriad of tech companies based there.

Last month, California Democratic state Sen. Scott Wiener introduced legislation imposing transparency requirements on AI companies. And Democratic Gov. Gavin Newsom signed an executive order in September that establishes guardrails for how AI is used in the state’s sprawling government.

More generally, the National Conference of State Legislatures has a task force focused on brainstorming legislative ideas around cybersecurity and AI. The group’s efforts are focused on a range of complex problems like privacy, protection of consumer data and safeguarding state systems from cyberattacks.

In August, the National Conference of State Legislatures released a primer for states with suggestions on how to regulate and apply AI. It also included advice on how to prevent its potential use by malicious actors.

“As state legislators we not only have a role in defining how our state and local governments may responsibly utilize these new AI tools, but also protecting our constituents as they engage with private sector businesses looking to adopt AI,” California Democratic Assemblymember Jacqui Irwin, who co-chairs the NCSL task force, said in a statement accompanying the report.

On the air

Democrats and Republicans alike have long wanted to crack down on big tech companies. As we wrote in our previous editions, social media regulation is a critical aspect of cybersecurity policy efforts thus far.

Those moves are mostly focused on Chinese-owned TikTok and other social media platforms. That includes curtailing the ability of social media platforms to market to children and ensuring Americans’ sensitive data isn’t being compromised.

Policies that aim to regulate social media, including its potentially harmful impacts on children, are popular among the general public because of the widespread use of those platforms. So focusing on social media in TV ads is sure to grab people’s attention. But efforts to outright ban TikTok have slowed due to fears of a public backlash.

TikTok has spent millions of dollars on TV ads in recent years touting the platform’s benefits. These commercials were prominently featured on major national broadcasts, online news sites and elsewhere on the web earlier this year when Congress appeared closer to banning the app in the U.S. or curtailing its use.

One commercial featured a member of the military who uses the platform to educate military families on their benefits. Another TV ad highlighted a woman who uses TikTok to maintain and grow her business — making the argument that the platform is vital to so many Americans’ economic well-being.

President Joe Biden referenced social media in his State of the Union address in February, saying it was time to pass legislation that stops these companies from “collecting personal data on our kids and teenagers online.” He also called for the banning of targeted ads for children.

These lines from the president prompted a standing ovation from both sides of the aisle — a fact highlighted by the left-leaning super PAC Future Forward USA Action in a TV ad spotlighting Biden’s remarks. The group spent more than $700,000 airing it in the month following Biden’s speech.

Other groups are also targeting consumers through the airwaves. Over the summer, Project Liberty Action Network spent nearly $7 million on a TV ad that showed a young girl sitting at the dinner table staring at her phone, with a narrator saying social media is “fine-tuned to suck them in and steal them away.”

The group, founded by billionaire Frank McCourt, has been promoting social media regulation efforts like the Kids Online Safety Act, a bill that cleared a Senate committee unanimously in July.

This summer alone, Project Liberty has spent nearly $20 million on social media-related TV ads.

While the 2024 elections add urgency to the matter, it could take a while — and coordination with lots of key players — before substantial cybersecurity legislation and regulation are finalized. Whatever the outcome, you can be certain these organizations will have had a hand in it.

— Andrew Desiderio
